Download and install the latest version of VMware Player for 64-bit Windows if you are using Windows; otherwise, download VMware Player for 64-bit Linux. Then download the latest version of the Cloudera QuickStart VM with CDH 5.3 for VMware, or download the Cloudera QuickStart VM for VirtualBox.

This technical talk was given at the VMworld conference events in the US and Europe in the past few months. In case you missed it when it occurred live, we thought we would give you a recording of it here.

The talk (VIRT7709 at VMworld) was created and delivered jointly by members of the technical staff at Cloudera and VMware in late 2016.

Cloudera and VMware have collaborated for several years on testing and mutually certifying various parts of the Hadoop/Spark ecosystem on vSphere. This work began in 2011 with the labs staff of both companies. From the creation of a set of reference architectures (two published by Cloudera on vSphere) to performance analysis and tooling, there are common points of interest that the companies continue to work on together. Key to the reference architectures are the familiar direct-attached storage model and an external storage model for HDFS data that is based on Isilon technologies. Both models have been tested and certified by Cloudera.

The speaker from Cloudera, Dwai Lahiri, highlights the detailed technical best practices from the reference architectures that apply to deploying Cloudera’s Distribution including Hadoop (CDH) on VMware vSphere. The VMware speaker starts by dispelling certain common myths about virtualizing Hadoop that can mislead someone who is new to the field. He then talks about the Hadoop core architecture and how it may be mapped onto appropriately sized virtual machines. A set of performance test results is shown, demonstrating that Spark workloads run on VMware vSphere with performance equal to native, and in some cases even better than native, due to better memory locality handling by multiple virtual machines on host servers. Early impressions are also given of the work that the two companies are doing together. This will give you technical insight into the direction the two companies are taking in the big data space.

The talk followed this agenda:

| # | Topic |
|---|-------|
| 1 | Use Cases for Virtualizing Hadoop |
| 2 | Myths about Virtualizing Big Data |
| 3 | Hadoop Architecture on vSphere – an introduction |
| 4 | Overview of the Cloudera Portfolio |
| 5 | Reference Architectures |
| 6 | Cloudera CDH Performance Testing on vSphere |
| 7 | Innovations from Cloudera and VMware |
| 8 | Conclusions and Q&A |

In this post I will show how to install the Cloudera QuickStart VM on VirtualBox. I need a Hadoop cluster to try the examples in the Hadoop Book. Appendix A of the book describes how to install Hadoop. However, it also hints at using a virtual machine (VM) that comes with a pre-configured, single-node Hadoop cluster. In particular, the book refers to the Cloudera QuickStart VM.

Cloudera is a company which provides Hadoop-based software, support and services. The Cloudera Hadoop distribution is called ‘Cloudera’s Distribution including Apache Hadoop (CDH)’. Cloudera is to Hadoop what Red Hat or SUSE are to Linux. Please note that there are many other Hadoop-based offerings.

Step 1 – Download the VM: Download the Cloudera QuickStart VM here. At the time of writing this post, the Cloudera QuickStart VM is available for Docker, KVM, VMware and VirtualBox. I downloaded the VM for VirtualBox with CDH 5.7. The zipped VM is about 5GB, so the download takes a few minutes. The archive needs to be extracted before the VM can be imported into VirtualBox.

Step 2 – Import the VM: VirtualBox is a free and easy-to-use hypervisor for x86 processors provided by Oracle. It runs on Linux, Solaris, OS X and Windows. Open the ‘File->Import Appliance’ menu of the VirtualBox GUI to import the extracted VM.

Browse to the directory where the VM was extracted, select it and press the ‘Open’ button.

Walk through the next panels without changing any settings. The last screen shows the configuration of the virtual server. Press the ‘Import’ button to import the VM.

It takes a few minutes to complete the import of the VM.
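Steps 1 and 2 can also be scripted instead of clicked through. The following is a minimal sketch, assuming that the `VBoxManage` command-line tool that ships with VirtualBox is on the PATH and that the extracted archive contains an appliance file ending in `.ovf` or `.ova`. The file name in the example is a hypothetical placeholder, not the real name of the download.

```python
import zipfile
from pathlib import Path
from typing import List


def extract_archive(zip_path: Path, target_dir: Path) -> List[Path]:
    """Unpack the downloaded archive and return the appliance files found in it."""
    with zipfile.ZipFile(zip_path) as archive:
        archive.extractall(target_dir)
    return sorted(
        p for p in target_dir.rglob("*") if p.suffix.lower() in (".ovf", ".ova")
    )


def import_command(appliance: Path) -> List[str]:
    """Build the VBoxManage call that corresponds to 'File->Import Appliance'."""
    return ["VBoxManage", "import", str(appliance)]


if __name__ == "__main__":
    # Hypothetical file name; replace it with the appliance you extracted.
    print(" ".join(import_command(Path("quickstart-vm.ovf"))))
```

The functions only build the command. To actually run the import, the returned list could be passed to `subprocess.run(cmd, check=True)`.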

Step 3 – Start the VM: Next the VM needs to be started. Select the QuickStart VM and press the ‘Start’ button with the green arrow at the top of the VirtualBox GUI.

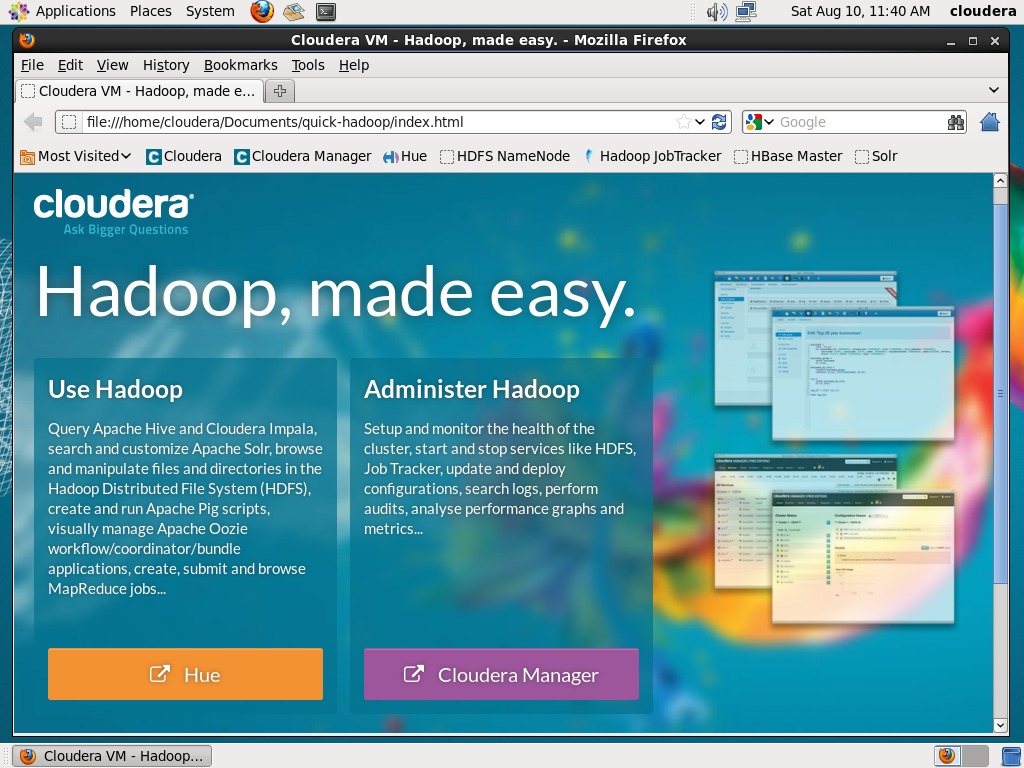

The Cloudera QuickStart VM takes some time to boot, because a lot of processes are started. Finally the Linux desktop appears and shows a webpage with the Hadoop cluster status and instructions for the QuickStart VM. I skip the Cloudera tutorial, because I want to try the examples in the Hadoop Book. Maybe I will look into the tutorial later.
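For those who prefer the command line to the green arrow, starting the VM and checking that it is running might look roughly like the sketch below. It assumes `VBoxManage` is on the PATH. The VM name is a hypothetical placeholder, so use the name that the VirtualBox GUI shows after the import.

```python
import re
from typing import List

# `VBoxManage list runningvms` prints one line per VM: "name" {uuid}
_VM_LINE = re.compile(r'^"(?P<name>.+)" \{[0-9a-fA-F-]+\}$')


def start_command(vm_name: str, headless: bool = False) -> List[str]:
    """Build the VBoxManage call that starts a VM with or without a window."""
    vm_type = "headless" if headless else "gui"
    return ["VBoxManage", "startvm", vm_name, "--type", vm_type]


def parse_running_vms(output: str) -> List[str]:
    """Extract the VM names from the output of `VBoxManage list runningvms`."""
    names = []
    for line in output.splitlines():
        match = _VM_LINE.match(line.strip())
        if match:
            names.append(match.group("name"))
    return names


if __name__ == "__main__":
    # Hypothetical VM name, for illustration only.
    print(" ".join(start_command("cloudera-quickstart-vm")))
```

A headless start is only useful once SSH access is set up, because no desktop window is shown in that mode.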

Step 4 – Enabling SSH: I prefer to work on the VM via PuTTY and SSH. A port forwarding rule for SSH needs to be configured to allow SSH connections from my laptop to the virtual machine. Select the VM in VirtualBox and click on ‘Network’.

Expand the ‘Advanced’ options and press the ‘Port Forwarding’ button.

The next panel lets you specify port forwarding rules. Press the symbol in the upper right corner to add a rule. In my example I mapped port 2000/tcp of my laptop to port 22/tcp of the VM. Port 22/tcp is the default port for SSH. Click the ‘OK’ button to activate the new rule.
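The same rule can be expressed on the command line. This is a minimal sketch, assuming the VM uses NAT on its first network adapter and that `VBoxManage` is on the PATH. The rule name and VM name are hypothetical placeholders. `modifyvm` applies to a powered-off VM; for a running VM, `VBoxManage controlvm` with `natpf1` would be the counterpart.

```python
from typing import List


def natpf_rule(name: str, host_port: int, guest_port: int,
               protocol: str = "tcp", host_ip: str = "", guest_ip: str = "") -> str:
    """Format a NAT rule as name,protocol,hostip,hostport,guestip,guestport."""
    for port in (host_port, guest_port):
        if not 1 <= port <= 65535:
            raise ValueError(f"port out of range: {port}")
    return f"{name},{protocol},{host_ip},{host_port},{guest_ip},{guest_port}"


def port_forward_command(vm_name: str, rule: str, adapter: int = 1) -> List[str]:
    """Build the VBoxManage call that adds the rule to the given NAT adapter."""
    return ["VBoxManage", "modifyvm", vm_name, f"--natpf{adapter}", rule]


if __name__ == "__main__":
    # Laptop port 2000/tcp is forwarded to port 22/tcp of the VM, as in the post.
    rule = natpf_rule("ssh", host_port=2000, guest_port=22)
    # Hypothetical VM name, for illustration only.
    print(" ".join(port_forward_command("cloudera-quickstart-vm", rule)))
```

Leaving the host and guest IP fields empty means the rule listens on all host interfaces and targets whatever address the guest received from the NAT adapter.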

Step 5 – SSH login: I use PuTTY to SSH from my Windows laptop to the Linux server. Open PuTTY and enter the IP address (127.0.0.1) and the port (2000). The port needs to be the same as the Host Port specified in the previous step. Press the ‘Open’ button to start an SSH session to the VM.

PuTTY opens an SSH session and I can easily log on as user ‘cloudera’ using password ‘cloudera’.
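If the login does not work, it helps to check whether the forwarded port answers at all. An SSH server announces itself with an identification line that starts with `SSH-` as soon as a client connects. The sketch below reads that line from 127.0.0.1 port 2000, the values used above; the function names are my own and the 5 second timeout is an arbitrary assumption.

```python
import socket
from typing import Optional, Tuple


def parse_ssh_banner(raw: bytes) -> Optional[Tuple[str, str]]:
    """Return (protocol version, software version) from an SSH identification line."""
    text = raw.decode("ascii", errors="replace")
    for line in text.splitlines():
        if line.startswith("SSH-"):
            parts = line.split("-", 2)
            if len(parts) == 3:
                # Anything after the first space is an optional comment.
                return parts[1], parts[2].split(" ", 1)[0]
    return None


def read_ssh_banner(host: str = "127.0.0.1", port: int = 2000,
                    timeout: float = 5.0) -> Optional[Tuple[str, str]]:
    """Connect to the forwarded port and parse whatever the server sends first."""
    with socket.create_connection((host, port), timeout=timeout) as sock:
        return parse_ssh_banner(sock.recv(256))


if __name__ == "__main__":
    try:
        print(read_ssh_banner())
    except OSError as error:
        print(f"no SSH server reachable on the forwarded port: {error}")
```

A result of `None` means something answered on the port but did not identify itself as an SSH server, which usually points to a wrong port forwarding rule.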

A quick test shows that Hadoop is ready to use. The descriptions for classpath and credential seem to be mixed up, though this is what I got. I checked twice.
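A similar check can be automated from the laptop. The sketch below is not the exact test from above; it uses the standard `hadoop version` subcommand instead and reads the version from the first output line that starts with `Hadoop`. It assumes an OpenSSH `ssh` client on the laptop (rather than PuTTY) and the port forwarding rule from Step 4.

```python
import re
from typing import List, Optional


def remote_version_command(port: int = 2000, user: str = "cloudera",
                           host: str = "127.0.0.1") -> List[str]:
    """Build an ssh call that runs `hadoop version` inside the VM."""
    return ["ssh", "-p", str(port), f"{user}@{host}", "hadoop", "version"]


def parse_hadoop_version(output: str) -> Optional[str]:
    """Return the version string from the output of `hadoop version`, if present."""
    for line in output.splitlines():
        match = re.match(r"^Hadoop\s+(\S+)", line)
        if match:
            return match.group(1)
    return None


if __name__ == "__main__":
    print(" ".join(remote_version_command()))
```

To run it for real, the command list could be passed to `subprocess.run(cmd, capture_output=True, text=True)` and the captured `stdout` handed to `parse_hadoop_version`.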


Ulf’s Conclusion

The Cloudera QuickStart VM is indeed easy to install. I am happy that I now have a system where I can try the exercises of the Hadoop book on my own.

In the next post I will explain the basics of MapReduce.

Changes:

2016/09/09 – added link – “basics of MapReduce” => BigData Investigation 4 – MapReduce Explained
